DeepSeek’s GitHub has been busy again. The company just open-sourced a new repository, Tile Kernels, and at the same time updated the DeepEP repository to bring DeepEP V2 online. This comes less than a week after DeepSeek’s last quiet update, which added Mega MoE and the FP4 Indexer.

DeepSeek Tile Kernels

Link: https://github.com/deepseek-ai/TileKernels

According to the repository’s introduction, Tile Kernels is a collection of GPU kernels optimized for LLM operations and built with TileLang. TileLang is a domain-specific language for writing high-performance GPU kernels in Python, designed for easy portability, agile development, and automatic optimization.

By DeepSeek’s own account, the kernels are already highly optimized: “Most kernels in this project are already close to the hardware performance limit in terms of compute intensity and memory bandwidth. Some of them have already been used internally in training and inference scenarios. However, they do not yet represent best practices, and we are continuing to improve the code quality and documentation.”
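“Close to the hardware limit” is commonly judged with a roofline model: a kernel’s attainable throughput is capped by the smaller of peak compute and arithmetic intensity × peak memory bandwidth. The minimal sketch below assumes a hypothetical GPU with round-number peaks (1000 TFLOP/s, 3 TB/s) and a rough FLOP count for an FP8 cast; none of these numbers come from DeepSeek, and the function names are illustrative.

```python
# Roofline sketch: attainable throughput is bounded by
# min(peak compute, arithmetic intensity * peak memory bandwidth).

def attainable_tflops(flops: float, bytes_moved: float,
                      peak_tflops: float, peak_tb_per_s: float) -> float:
    intensity = flops / bytes_moved  # FLOPs per byte of memory traffic
    return min(peak_tflops, intensity * peak_tb_per_s)


def min_runtime_us(flops: float, bytes_moved: float,
                   peak_tflops: float, peak_tb_per_s: float) -> float:
    # A kernel can finish no faster than the slower of its two roofs allows.
    compute_s = flops / (peak_tflops * 1e12)
    memory_s = bytes_moved / (peak_tb_per_s * 1e12)
    return max(compute_s, memory_s) * 1e6


# Hypothetical GPU peaks (round numbers, not a specific product).
PEAK_TFLOPS, PEAK_TB_PER_S = 1000.0, 3.0

# Example workload: cast an 8192 x 7168 BF16 tensor to FP8
# (read 2 bytes + write 1 byte per element; assume ~2 FLOPs per element).
elems = 8192 * 7168
flops, nbytes = 2 * elems, 3 * elems

print(attainable_tflops(flops, nbytes, PEAK_TFLOPS, PEAK_TB_PER_S))  # memory-bound
print(min_runtime_us(flops, nbytes, PEAK_TFLOPS, PEAK_TB_PER_S))     # microseconds
```

Because this workload’s intensity (about 0.67 FLOP/byte) sits far below the ridge point (peak FLOP/s ÷ peak bandwidth, roughly 333 FLOP/byte here), such a kernel is judged by how close it gets to peak memory bandwidth rather than peak compute.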

The repository offers little introductory material, but reading between the lines, it already “spoils” the architectural direction of DeepSeek’s next-generation models.

DeepSeek Tile Kernels Features

Here are some specific features of Tile Kernels:

Gating mechanism: Top-k expert selection and scoring for MoE routing

MoE routing: Mapping tokens to experts, fused expand/reduce, and weight normalization

Quantization: Supports FP8/FP4/E5M6 conversion in per-token, per-block, and per-channel modes, and fuses SwiGLU + quantization operations

Transpose: Batched transpose operations

Engram: Engram gating kernels, fusing RMSNorm, forward/backward propagation, and weight-gradient reduction

Manifold HyperConnection: Hyper-connection kernels, including Sinkhorn normalization and mix split/apply operations

Modeling: High-level torch.autograd.Function wrappers that combine the underlying kernels into trainable layers (engram gate, mHC pipeline)
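The gating and MoE-routing items above can be illustrated in plain Python: score the experts, keep the top-k, renormalize their weights, expand tokens into per-expert groups, then reduce the expert outputs back per token. This is a minimal sketch assuming a softmax gate and toy experts; it is not the Tile Kernels implementation, whose scoring function and fused memory layout may differ, and all names here are hypothetical.

```python
import math


def topk_gate(logits, k):
    """Softmax over expert logits, keep the k highest-scoring experts,
    then renormalize the kept weights so they sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]  # subtract max for numerical stability
    total = sum(exps)
    scores = [e / total for e in exps]
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    kept = sum(scores[i] for i in top)
    return top, [scores[i] / kept for i in top]


def expand_tokens(routes):
    """Group (token index, weight) pairs by expert id: expert -> [(t, w), ...]."""
    buckets = {}
    for t, (experts, weights) in enumerate(routes):
        for e, w in zip(experts, weights):
            buckets.setdefault(e, []).append((t, w))
    return buckets


def reduce_tokens(num_tokens, dim, buckets, expert_outputs):
    """Weighted sum of every expert output back into the original token order."""
    out = [[0.0] * dim for _ in range(num_tokens)]
    for e, entries in buckets.items():
        for (t, w), y in zip(entries, expert_outputs[e]):
            for j in range(dim):
                out[t][j] += w * y[j]
    return out


# Usage: 2 tokens, hidden size 2, 4 experts, top-2 routing.
tokens = [[1.0, 2.0], [0.5, -1.0]]
logits = [[2.0, 0.1, 1.0, -1.0], [0.0, 3.0, 0.2, 2.5]]
routes = [topk_gate(row, k=2) for row in logits]
buckets = expand_tokens(routes)
# Toy experts: expert e simply scales its input by (e + 1).
expert_outputs = {e: [[(e + 1) * v for v in tokens[t]] for t, _ in entries]
                  for e, entries in buckets.items()}
combined = reduce_tokens(len(tokens), 2, buckets, expert_outputs)
print(routes[0][0], combined[0])
```

A production kernel fuses these steps and works on contiguous per-expert buffers instead of Python dictionaries, but the data flow (select, expand, run experts, weighted reduce) is the same.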
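The quantization item mentions per-token and per-block scaling. A common recipe, and the one the DeepSeek-V3 technical report describes for activations, is one scale per 1×128 tile, chosen so the tile’s largest magnitude maps onto the FP8 E4M3 maximum of 448. The sketch below assumes E4M3 and simulates the cast in pure Python (round-to-nearest-even, saturation, no NaN handling); FP4 and E5M6 paths, per-channel scaling (the same idea applied along columns), and the real fused kernel are not shown, and all function names are hypothetical.

```python
import math

FP8_E4M3_MAX = 448.0  # largest finite value in the FP8 E4M3 (fn) format


def round_to_e4m3(x: float) -> float:
    """Round a float to the nearest FP8 E4M3 value (saturating at +/-448)."""
    if x == 0.0:
        return 0.0
    a = min(abs(x), FP8_E4M3_MAX)
    if a < 2.0 ** -6:                      # subnormal range: fixed step of 2^-9
        q = round(a / 2.0 ** -9) * 2.0 ** -9
    else:
        m, e = math.frexp(a)               # a = m * 2**e with 0.5 <= m < 1
        q = min(round(m * 16) / 16 * 2.0 ** e, FP8_E4M3_MAX)  # keep 3 mantissa bits
    return math.copysign(q, x)


def quantize(row, block):
    """One scale per `block` consecutive values; block=len(row) gives per-token scaling."""
    q, scales = [], []
    for start in range(0, len(row), block):
        tile = row[start:start + block]
        amax = max(abs(v) for v in tile) or 1.0   # guard against all-zero tiles
        s = amax / FP8_E4M3_MAX                   # maps the tile's abs-max onto 448
        q.extend(round_to_e4m3(v / s) for v in tile)
        scales.append(s)
    return q, scales


def swiglu_quantize(gate, up, block):
    """SwiGLU (silu(gate) * up) followed by quantization; a fused kernel does both in one pass."""
    h = [g / (1.0 + math.exp(-g)) * u for g, u in zip(gate, up)]
    return quantize(h, block)


# Usage: per-token vs per-block (1 x 128) scaling of a 256-wide row with one outlier.
row = [math.sin(i) * 0.01 for i in range(256)]
row[200] = 50.0
q_tok, s_tok = quantize(row, block=len(row))
q_blk, s_blk = quantize(row, block=128)
print(len(s_tok), len(s_blk))
```

With a single per-token scale, the outlier at index 200 forces a large scale onto the whole row, pushing the small values in the first tile down toward the subnormal range; per-block scaling keeps that tile’s own, much smaller scale.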
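Sinkhorn normalization, listed under Manifold HyperConnection, turns a square matrix of positive weights into an approximately doubly stochastic one (every row and column sums to 1). As we understand DeepSeek’s mHC design, this is used to keep the residual-stream mixing matrix on that manifold. The sketch assumes a plain Sinkhorn-Knopp loop with a fixed iteration count; the 4 × 4 size and `iters=50` are illustrative guesses, not values taken from Tile Kernels.

```python
import math


def sinkhorn(logits, iters=50):
    """Sinkhorn-Knopp: exponentiate, then alternately rescale rows and columns
    so the matrix approaches a doubly stochastic one (rows and columns sum to 1)."""
    m = [[math.exp(x) for x in row] for row in logits]  # make every entry positive
    n = len(m)
    for _ in range(iters):
        for i in range(n):                              # row normalization
            s = sum(m[i])
            m[i] = [v / s for v in m[i]]
        for j in range(n):                              # column normalization
            s = sum(m[i][j] for i in range(n))
            for i in range(n):
                m[i][j] /= s
    return m


# Usage: a 4 x 4 mixing matrix, e.g. one per set of 4 residual streams (hypothetical size).
logits = [[math.sin(4 * i + j) for j in range(4)] for i in range(4)]
mix = sinkhorn(logits)
for row in mix:
    print([round(v, 4) for v in row])
```

Because the loop ends on a column pass, columns sum to 1 up to rounding, while rows converge geometrically; a fixed iteration count keeps the operation cheap and differentiable, which matters when it sits inside a trainable layer.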

DeepSeek EPv2: Faster EP With Engram, PP, and CP Support

EPv2 link: https://github.com/deepseek-ai/DeepEP/pull/605

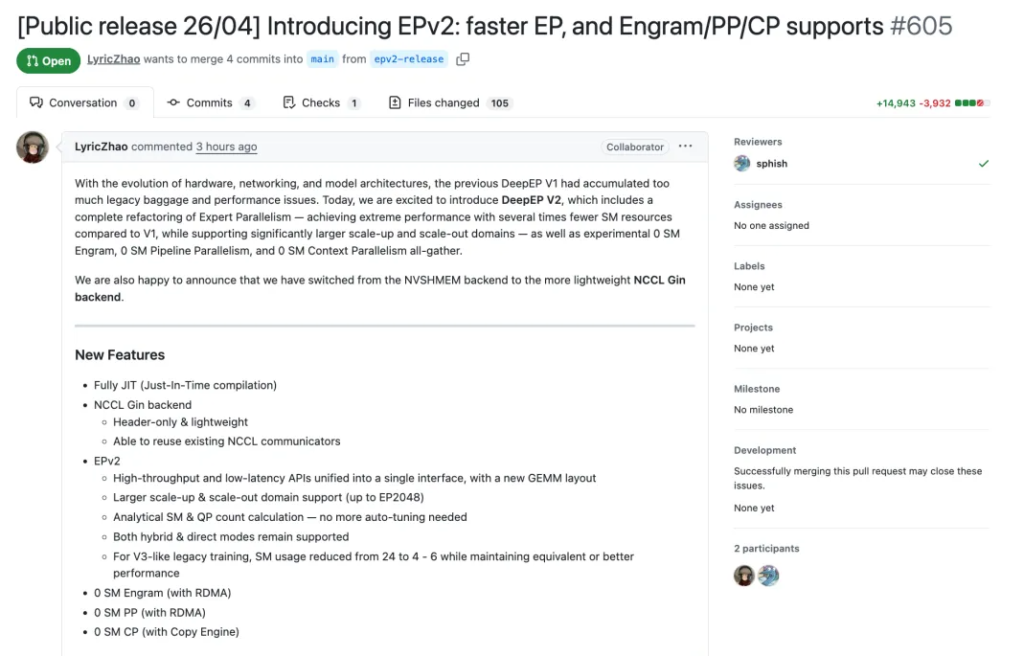

Earlier today, DeepSeek also released EPv2, which delivers faster expert parallelism (EP) and adds support for Engram, pipeline parallelism (PP), and context parallelism (CP).

As hardware, networks, and model architectures have evolved, DeepSeek’s earlier DeepEP V1 had accumulated too much historical baggage and too many performance issues.

This update completely restructures the expert-parallelism implementation. Compared with V1, it reaches peak performance using only a fraction of the SMs, and it supports larger scale-up (within a single machine) and scale-out (across machines) domains.
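In expert parallelism, each rank hosts a slice of the experts, and the dispatch step sends every token to the ranks that own its selected experts; traffic to GPUs in the same machine uses the scale-up fabric (for example NVLink), and the rest goes over the scale-out network (for example RDMA). The sketch below assumes experts are split evenly and contiguously across ranks and that a token is sent at most once per destination rank; the function and parameter names are hypothetical and not part of the DeepEP API.

```python
def plan_dispatch(topk_experts, num_experts, num_ranks, ranks_per_node, src_rank):
    """Split one token's destinations into scale-up (same node) and scale-out
    (other nodes) ranks, sending at most one copy per destination rank."""
    experts_per_rank = num_experts // num_ranks          # contiguous, even sharding
    dst_ranks = sorted({e // experts_per_rank for e in topk_experts})
    src_node = src_rank // ranks_per_node
    scale_up = [r for r in dst_ranks if r // ranks_per_node == src_node]
    scale_out = [r for r in dst_ranks if r // ranks_per_node != src_node]
    return scale_up, scale_out


# Usage: 256 routed experts over EP16 (2 nodes x 8 GPUs), token on rank 0 with top-8 experts.
up, out = plan_dispatch([3, 7, 20, 21, 130, 131, 200, 250],
                        num_experts=256, num_ranks=16, ranks_per_node=8, src_rank=0)
print(up, out)
```

Growing the scale-up domain (more GPUs per node or per NVLink domain) moves more destinations from the `scale_out` list into the `scale_up` list, which is why larger scale-up support matters for communication cost.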

The update also introduces an experimental “0 SM” series of operators that occupy no streaming multiprocessors: 0 SM Engram, 0 SM pipeline parallelism (PP), and 0 SM context parallelism (CP) all-gather. In addition, the backend has been switched from NVSHMEM to the lighter-weight NCCL Gin backend.

New Features in DeepSeek DeepEP V2

Here are some of the new features in DeepEP V2:

Fully JIT

NCCL Gin backend:

Header-only, extremely lightweight

Able to reuse existing NCCL communicators

EPv2:

Unifies high-throughput and low-latency APIs into a single interface, and adopts a brand-new GEMM layout

Supports larger scaling domains, up to EP2048

Calculates SM and QP (RDMA queue pair) counts analytically, so auto-tuning is no longer needed

Continues to support both Hybrid mode and Direct mode

For V3-style training workloads, SM usage drops from 24 to 4–6 while matching or exceeding V1 performance

0 SM Engram (with RDMA)

0 SM PP (with RDMA)

0 SM CP (with Copy Engine)
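To put the 24 → 4–6 SM figure in proportion, here is a small calculation of the share of a GPU’s SMs taken by communication. It assumes a 132-SM GPU (H800/H100 SXM class); the source does not state which hardware the figure refers to, and the helper name is hypothetical.

```python
TOTAL_SMS = 132  # assumption: an H800/H100 SXM-class GPU; the source does not name the hardware


def sm_savings(v1_sms: int, v2_sms: int, total_sms: int = TOTAL_SMS) -> dict:
    """Share of the GPU's SMs taken by communication kernels, before and after."""
    return {
        "v1_share": v1_sms / total_sms,
        "v2_share": v2_sms / total_sms,
        "reduction": v1_sms / v2_sms,
        "freed_sms": v1_sms - v2_sms,
    }


for v2 in (4, 6):
    r = sm_savings(24, v2)
    print(f"V2 with {v2} SMs: {r['v2_share']:.1%} of the GPU (V1: {r['v1_share']:.1%}), "
          f"{r['reduction']:.0f}x fewer, {r['freed_sms']} SMs returned to compute")
```

Under that assumption, the 24 → 6 case is the 4× SM reduction quoted in the performance results, and every freed SM is available to overlapping GEMM or attention kernels.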

DeepSeek DeepEP V2 Performance

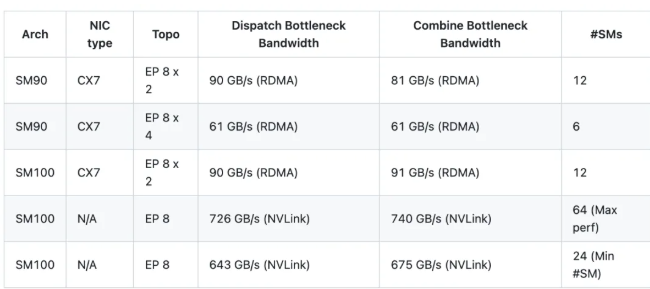

DeepSeek benchmarked the new version using a DeepSeek-V3-style configuration: 8K tokens per batch, a hidden dimension of 7168, top-8 experts, FP8 dispatch, and BF16 combine. The results are shown in the figure above.

Note: The figures are logical bandwidth. For example, in the EP 8 × 2 configuration, the reported 90 GB/s also counts traffic between GPUs on the same node (local ranks).
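For a sense of scale, here is a back-of-the-envelope estimate of how many bytes one dispatch and one combine move for these settings. It assumes the 8K tokens are per rank, counts every top-8 copy separately (no per-rank deduplication), and assumes one FP32 scale per 128 FP8 values; it does not reproduce DeepEP’s own bandwidth accounting, and the helper names are hypothetical.

```python
TOKENS, HIDDEN, TOPK = 8192, 7168, 8        # DeepSeek-V3-style settings from the benchmark
FP8_BYTES, BF16_BYTES = 1, 2
SCALE_BLOCK, SCALE_BYTES = 128, 4           # assumption: one FP32 scale per 128 FP8 values


def payload_bytes(bytes_per_elem: int, with_scales: bool = False) -> int:
    """Upper bound on bytes moved: one copy of each token per selected expert."""
    per_copy = HIDDEN * bytes_per_elem
    if with_scales:
        per_copy += (HIDDEN // SCALE_BLOCK) * SCALE_BYTES
    return TOKENS * TOPK * per_copy


def transfer_ms(nbytes: int, gb_per_s: float) -> float:
    return nbytes / (gb_per_s * 1e9) * 1e3


dispatch = payload_bytes(FP8_BYTES, with_scales=True)
combine = payload_bytes(BF16_BYTES)
for name, nbytes in (("dispatch (FP8)", dispatch), ("combine (BF16)", combine)):
    print(f"{name}: {nbytes / 2**20:.0f} MiB, {transfer_ms(nbytes, 90):.2f} ms at 90 GB/s")
```

Counting bytes this way includes copies destined for GPUs on the same node, which is the sense in which the benchmark figures are logical rather than physical link bandwidth. The BF16 combine moves roughly twice the FP8 dispatch volume, which is why FP8 dispatch matters.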

Compared with V1, V2 reaches up to 1.3× the peak performance while using up to 4× fewer SMs.

Finally, just a bit of advice for DeepSeek: hurry up and release V4 already. Everyone is getting impatient.