The recent OpenAI Codex model leak was not a breach of model weights or data. It was a UI-level exposure that briefly revealed internal model names such as GPT-5.5, Arcanine, and Glacier-alpha. Based on aggregated Reddit discussions and user observations, the incident suggests that OpenAI is testing multiple next-generation models behind the scenes, with a likely focus on agent-based workflows, long-context memory, and advanced reasoning.

What Exactly Happened in the OpenAI Codex Leak?

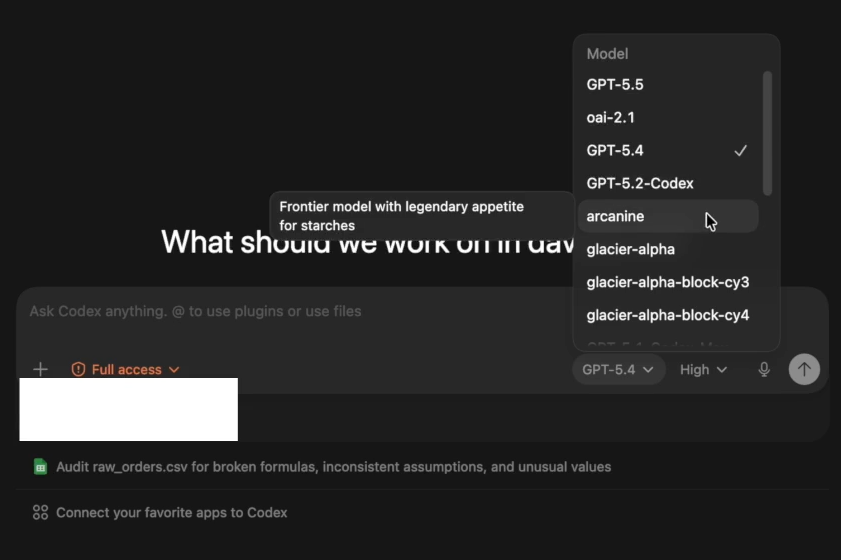

The leak originated from a Reddit post where a user captured a model selection dropdown inside Codex that temporarily exposed internal model names not publicly announced.

Key Observations from the Leak

- The interface displayed:

  - GPT-5.5

  - Arcanine

  - Glacier-alpha

- The issue was quickly patched, indicating:

  - It was not intentional

  - It likely came from a staging or internal testing environment

Why This Matters

From an engineering perspective, UI leaks like this typically happen when:

- Internal models are connected to production interfaces

- Feature flags or access controls fail temporarily
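One way to picture the failure mode described above is a model picker that filters its options through a feature-flag check. The following is a minimal sketch under stated assumptions, not a description of how Codex is actually built: `MODELS`, `visible_models`, `broken_lookup`, and the `flag_lookup` callable are hypothetical names, and marking the three leaked names as internal is an assumption for illustration. It contrasts a fail-open fallback, which would expose internal entries when the flag lookup errors out, with a fail-closed one.

```python
from typing import Callable

# Hypothetical catalogue: the names come from the screenshot, the
# "internal" labels are an assumption made for illustration only.
MODELS = [
    {"name": "public-model", "internal": False},
    {"name": "GPT-5.5", "internal": True},
    {"name": "Arcanine", "internal": True},
    {"name": "Glacier-alpha", "internal": True},
]


def visible_models(
    user: str, flag_lookup: Callable[[str], bool], fail_open: bool
) -> list[str]:
    """Return the model names a user should see in the dropdown."""
    try:
        can_see_internal = flag_lookup(user)
    except Exception:
        # If the flag service is unavailable, the fallback decides
        # whether internal entries leak (fail-open) or stay hidden.
        can_see_internal = fail_open
    return [m["name"] for m in MODELS if can_see_internal or not m["internal"]]


def broken_lookup(user: str) -> bool:
    """Simulate a flag service outage (hypothetical)."""
    raise TimeoutError("flag service unavailable")
```

Calling `visible_models("someone", broken_lookup, fail_open=True)` returns all four names, while `fail_open=False` returns only the public entry. A fail-closed default is the usual design choice for anything that gates unreleased features.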

This aligns with past patterns in AI tooling leaks, where product-layer exposure—not model-level compromise—reveals roadmap signals.

What Do GPT-5.5, Arcanine, and Glacier-Alpha Likely Represent?

Based on user analysis patterns across Reddit and historical naming conventions, we can infer likely roles for each model.

GPT-5.5: Incremental Evolution, Not a Breakthrough

Users widely interpret GPT-5.5 as:

- A mid-cycle upgrade to GPT-5.4

- Focused on:

  - Better reasoning stability

  - Improved agent execution

  - Lower hallucination rates

This matches how OpenAI has iterated historically (e.g., GPT-4 → 4.5 → 4.1 variants).

Arcanine: A Potential Agent-Oriented Model

The name “Arcanine” triggered strong speculation due to its distinct naming style.

User pattern analysis suggests it may represent an agent execution model optimized for:

- Tool usage

- Multi-step workflows

- Autonomous task completion

This aligns with the rapid shift toward AI agents replacing static chat models.

Glacier-Alpha: Long Context or Memory System

“Glacier” consistently appeared in discussions related to:

- Persistent memory

- Long-context reasoning

- Knowledge storage

Users hypothesize it could be:

- A memory-augmented model

- Or a backend system enabling:

  - Cross-session recall

  - Long-form task continuity

This fits directly into the industry-wide push toward stateful AI systems.
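To make "cross-session recall" concrete, here is a toy sketch of memory that outlives a single session. It is purely illustrative and says nothing about what Glacier-alpha actually is: the `SessionMemory` class, its JSON file format, and the keyword-based `recall` method are all assumptions chosen for brevity.

```python
import json
from pathlib import Path


class SessionMemory:
    """Toy persistent memory: notes survive across sessions via a JSON file."""

    def __init__(self, path: Path):
        self.path = path
        # Load notes left by earlier sessions, if the file already exists.
        self.notes: list[str] = (
            json.loads(path.read_text()) if path.exists() else []
        )

    def remember(self, note: str) -> None:
        """Store a note and write the full list back to disk."""
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes))

    def recall(self, keyword: str) -> list[str]:
        """Return stored notes containing the keyword (case-insensitive)."""
        return [n for n in self.notes if keyword.lower() in n.lower()]
```

A second `SessionMemory` created later with the same path sees the notes written by the first, which is the essence of a stateful system. A production design would more plausibly use a database or vector index rather than a flat file.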

Why Reddit Users Believe This Leak Is a Real Roadmap Signal

Across multiple threads, a clear consensus emerged:

This was not random—it reflects real internal model development.

Supporting Evidence from User Analysis

- Consistent Naming Patterns

  - Internal codenames often differ from public releases

  - Matches prior leaks in AI tooling ecosystems

- Timing with Codex Updates

  - Codex recently expanded capabilities:

    - Multi-step task execution

    - System-level interaction

    - Memory handling

- Immediate Patch Response

  - Suggests:

    - Exposure was unintended

    - But the models are real and active internally

Codex Is No Longer Just a Coding Tool—It’s Becoming an Agent System

The most important insight from this event is not the model names—it’s what they imply.

Shift Observed in Real Usage

Based on user testing and community feedback:

Codex is evolving into a system that can:

- Execute workflows

- Interact with tools

- Maintain context across tasks

Real-World Behavior Change

Users report:

- More persistent task execution

- Better handling of multi-step prompts

- Early signs of autonomy in workflows

This supports the idea that Codex is transitioning from a “code generator” to an AI operating layer for task execution.

Why Leaks Like This Are Increasing (And Why They Matter)

This is not an isolated event.

Pattern Across AI Ecosystem

Recent leaks tend to originate from:

- UI components

- Tooling integrations

- Feature rollouts

Not from:

- Model weights

- Training data

What This Means Strategically

For advanced users and developers:

- Leaks are becoming:

  - A signal of internal experimentation

  - A way to detect future product direction early

- They often reveal:

  - Capabilities before official announcements

  - Shifts in architecture (e.g., agents, memory systems)

The Open-Source Acceleration Effect: From Leak to Ecosystem Growth

One of the most overlooked consequences of this leak is how quickly it triggered open-source activity.

Observed Trend

- Developers began:

  - Reverse-engineering potential capabilities

  - Building alternative tools, similar to the rapid community scaling seen when OpenClaw hit 160k

- Projects inspired by similar leaks have:

  - Rapidly gained traction

  - Attracted thousands of contributors

Practical Impact

This creates a feedback loop:

- Leak exposes capability direction

- Developers replicate or experiment

- Ecosystem innovation accelerates

This dynamic is now a core driver of AI progress outside major labs.

User Trust, Transparency, and the “Hidden Model” Debate

A recurring concern across discussions is transparency.

Core User Concern

Many users believe the publicly available models are only a subset of what exists internally.

Typical sentiment:

- “There are better models internally than what we can access”

Why This Matters

This impacts:

- Trust in AI providers

- Expectations for performance

- Adoption decisions in enterprise settings

What This Leak Tells Us About the Future of AI

When we connect all signals, a clear direction emerges.

1. Rapid Iteration Beyond Public Releases

OpenAI is likely:

- Running multiple model variants simultaneously

- Testing them in controlled environments

2. Agents Will Replace Traditional Chat Interfaces

The presence of specialized models suggests:

- Separation of roles:

  - Reasoning

  - Memory

  - Execution

This is the foundation of true AI agents.
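The separation of roles listed above can be sketched as three pluggable parts of one loop. This is a hedged illustration, not OpenAI's architecture: the `Agent` dataclass, the `"tool:argument"` step format, and the `toy_plan` and `toy_tools` stand-ins are all hypothetical, and a real system would put a model behind the planning step.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    # Reasoning: turns a task into an ordered list of "tool:argument" steps.
    plan: Callable[[str], list[str]]
    # Execution: maps a tool name to the function that carries out a step.
    tools: dict[str, Callable[[str], str]]
    # Memory: a running log of what each step produced.
    memory: list[str] = field(default_factory=list)

    def run(self, task: str) -> list[str]:
        for step in self.plan(task):
            tool_name, _, arg = step.partition(":")
            result = self.tools[tool_name](arg)
            self.memory.append(f"{step} -> {result}")
        return self.memory


def toy_plan(task: str) -> list[str]:
    """Hypothetical planner: always uppercases the task, then counts it."""
    return [f"upper:{task}", f"count:{task}"]


toy_tools = {"upper": str.upper, "count": lambda s: str(len(s))}
```

With `Agent(plan=toy_plan, tools=toy_tools).run("fix bug")`, each step is planned, dispatched to a tool, and logged to memory in turn. Keeping the three roles behind separate interfaces is what would let each be served by a different specialized model.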

3. Memory Will Become a Core Layer

Models like Glacier-alpha indicate:

- Persistent context is becoming:

  - A first-class feature

  - Not just an add-on

Final Takeaway

The OpenAI Codex model leak was not a security failure—it was a rare glimpse into how modern AI systems are built behind the scenes.

What it revealed is far more important than the leak itself:

- OpenAI is actively developing multi-model systems

- Codex is evolving into a full agent platform

- The future of AI is shifting toward:

  - Autonomy

  - Memory

  - System-level execution

For anyone building, investing in, or optimizing for AI, this is not just news—it’s a directional signal of where the entire industry is heading next.