The long-awaited HappyHorse-1.0 has officially entered grayscale testing, a phased rollout to a limited set of users. Anyone who has followed the AI video space closely over the past three weeks will recognize the name.

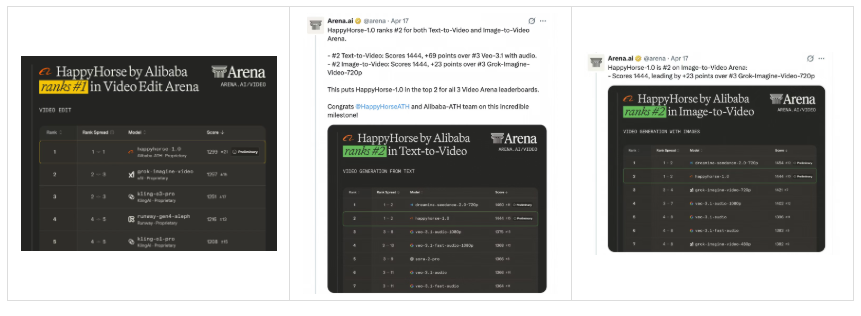

On April 7, an anonymous model quietly appeared on the Video Arena leaderboard run by Artificial Analysis. After three days of speculation across the internet, Alibaba stepped forward and officially “claimed” the model. With an Elo score of 1299, it ranked first in video editing on the Arena leaderboard and second in both the text-to-video and image-to-video categories.

Now, this dark horse that stunned the global AI community has stepped out from behind the curtain. Starting today, professional creators and enterprise clients worldwide can register and use it on the official HappyHorse website (www.happyhorse.cn) and on the Alibaba Cloud Bailian platform, while general users can try it in the Qwen App.

HappyHorse Cinematic Quality: Not Just Built on Filters

After hands-on testing, we found that HappyHorse performs exceptionally well in medium-close shots.

Its instruction-following is very strong, and it delivers when capturing human emotion. We asked it for a Japanese drama-style clip in the manner of Shunji Iwai: a boy and a girl on a school campus, a youthful mood, cicada sounds, a hazy sense of memory, overexposed soft lighting, backlit shots, slow motion, and shallow depth of field.

The video immediately feels different from earlier AI-generated clips. With a wide aperture and shallow depth of field in particular, the separation between subject and background is natural and delicate. The medium-close shots have room to breathe and carry real emotional tension. The overexposure and soft light are handled with restraint, capturing the Iwai aesthetic quite precisely.

This kind of complete narrative rhythm and emotional layering finally brings “cinematic AI video” to life.

Next, we had it generate a suspense-style movie trailer from five emotional shots. The result is already close to film-industry standards. It has none of the fragmented feel of short-form video. Instead, it builds suspense and emotional tension through steady pacing and progression, bringing both the storytelling and the emotional expression much closer to real cinematic language.

Emotional Expression and Character Performance in HappyHorse

The Korean girl-group MV it generated is layered, stylish, and visually striking, and its emotional and stylistic expression makes it feel like a real commercial music video.

Of course, HappyHorse still can’t completely escape the flaws of AI video models. In the final frames, for example, two members of the group suddenly become identical.

Recently, people have been using ChatGPT to restore old photos from the 1990s. Pairing that with HappyHorse-1.0 feels like the perfect finishing touch: faded memories come alive, vivid and lifelike.

Overall, whether it’s dialogue-heavy emotional scenes or high-intensity action sequences, it can maintain impressive consistency and logic across 15 seconds of multi-shot transitions. This is exactly where its cinematic narrative quality comes from.

Even more surprising is its strong character performance. In one example, a girl leaning back with folded arms shows smooth and natural body language, without any mechanical stiffness. Micro-expressions stand out: a boy raises his eyebrows in surprise, avoids eye contact, then transitions into a tight-lipped bitter smile — clear emotional layers conveying guilt and awkwardness.

Even the trembling disappointment in the girl’s voice and the boy’s delayed, breathy response match the scene perfectly. This kind of smooth multi-character dialogue significantly reduces the stiffness typical of AI-generated video, bringing the performance closer to live-action filming.

HappyHorse AI Understanding and Multi-Shot Storytelling

HappyHorse also shows strong semantic understanding and instruction-following. From a simple text prompt, it can automatically plan the shot sequence and compose the storyboard.

For example, given a nine-panel storyboard and a prompt, it demonstrates impressive comprehension. It can directly output a 10-second cel-shaded animation with accurate plot capture and fully realized visual style.

That said, in the final seconds, when the cat-girl sheathes her sword, the background still differs slightly from the original reference image.

HappyHorse Styles: From Anime to Traditional Chinese Aesthetics

HappyHorse can also handle a wide range of styles.

It ranges from neon-drenched Hong Kong cinema and grainy retro film textures to the soothing freshness of Hayao Miyazaki-style animation and the lively charm of Pixar-like 3D, and from the negative-space aesthetics of ink wash painting to the handmade feel of origami and clay. It reproduces them all with precision.

One of its strongest areas is narrative-driven scenes. Whether it’s ancient-style farewell scenes, solitary drinking under the moon, or a scholar writing in contemplation, the emotional intensity is rich and full.

Take a scene of a young swordsman dancing under peach blossoms. From the rhythm of the camera movement to the fine detail, and from the color atmosphere to the emotional delivery, it reaches near film-level polish. The youthful feeling is captured just right.

We even had it generate a Chinese-style animation similar to the scene in Chang’an 30,000 Miles where Li Bai writes “Jiang Jin Jiu.” The result carries both the grandeur of the Tang dynasty and the lonely desolation of the poet, a remarkably faithful recreation of the classical artistic mood.

Its AI-generated Chinese-style animation truly brings cultural relics “to life,” and not just visually, but with soul. Tang sancai camels blink, Sanxingdui masks subtly move their mouths, Dunhuang flying apsaras float out of murals with flowing ribbons and weightless grace.

Still, a critical eye will find imperfections. When the apsaras rise into the air, for example, their arm movements can look slightly unnatural, and ideal results sometimes take several attempts.

Action Scenes and Dynamic Performance in HappyHorse

Notably, HappyHorse shines in action scenes as well.

In a generated duel between two swordsmen, the moves are fierce and sharp, fully expressing that cold, intense Eastern aesthetic.

Or take a high-speed racing sequence — the tension of speed and adrenaline is pushed to the extreme, genuinely thrilling.

HappyHorse Video Editing: Edit Videos with One Sentence

Beyond generation, HappyHorse’s second ace is video editing.

It offers two editing modes: V2V natural language editing and SV2V reference image editing.

The core of V2V is simple — “edit video with one sentence.” No need to open professional editing software. Just describe what you want to change, and the AI understands and executes it.

SV2V allows you to upload a reference image and seamlessly replace the subject in a video — letting your favorite characters appear in your scenes.

For example, take an original clip of a girl running along a country path. Add a style reference image, and HappyHorse transforms it into a “Ghibli-style” visual, with soft colors and lighting in every frame.

You can even swap the subject entirely — turn a dog into a cat — and change the background music, all in one go.

Even fine adjustments are simple: “change the amber liquid in the video to a pink-purple gradient with a starry effect.”

HappyHorse in the AI Video Race: A New Powerful Player

Looking at the bigger timeline, the launch of HappyHorse comes at a key moment in the AI video space.

On March 25, OpenAI officially shut down Sora’s full service. Once considered the “ceiling” of AI video, it exited due to high compute costs and commercialization challenges.

But instead of cooling down, the global AI video race has become even more crowded — Google’s Veo 3.1 and xAI’s Grok Imagine are competing fiercely, while Chinese players are rising collectively.

What’s interesting is how HappyHorse entered the market. Instead of a high-profile launch, it chose a “prove with results first, reveal identity later” strategy.

Still, no matter how good the story is, what determines how far it runs is the product itself.

HappyHorse-1.0 pushes forward on several key fronts:

- Visual quality that no longer screams “AI”

- Narrative ability where one prompt supports a full multi-shot story

- Audio-visual synchronization as a native part of generation

- Editing capability where natural language replaces professional tools

HappyHorse Pricing: High Value for Creators

For most creators, the most practical signal is cost-effectiveness.

The official HappyHorse site offers ready-to-use creative templates and community presets, making it easy to get started. Pricing is competitive too, and new users get free credits.

Standard pricing:

- 720P: 0.9 RMB/sec

- 1080P: 1.6 RMB/sec

With subscription discounts:

- 720P: as low as 0.44 RMB/sec

- 1080P: as low as 0.78 RMB/sec
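
To put those per-second rates in context, here is a minimal sketch of what a single clip might cost. It assumes plain per-second billing with no minimum charge or rounding, which the price list does not confirm, and it treats the “as low as” subscription figures as a best case. The function names and the 15-second clip length are our own choices for illustration.

```python
from decimal import Decimal

# Per-second rates in RMB, copied from the price list above.
STANDARD = {"720P": Decimal("0.9"), "1080P": Decimal("1.6")}
# "As low as" subscription rates: a best case, not a guaranteed price.
SUBSCRIPTION_FLOOR = {"720P": Decimal("0.44"), "1080P": Decimal("0.78")}


def clip_cost(seconds: int, resolution: str, rates: dict[str, Decimal]) -> Decimal:
    """Cost in RMB of one clip, ASSUMING plain per-second billing."""
    return rates[resolution] * seconds


def max_saving_percent(resolution: str) -> Decimal:
    """Largest possible discount versus the standard rate, in percent."""
    standard = STANDARD[resolution]
    floor = SUBSCRIPTION_FLOOR[resolution]
    return ((standard - floor) / standard * 100).quantize(Decimal("0.01"))


if __name__ == "__main__":
    for res in STANDARD:
        print(
            res,
            "standard:", clip_cost(15, res, STANDARD),
            "subscription floor:", clip_cost(15, res, SUBSCRIPTION_FLOOR),
            "max saving %:", max_saving_percent(res),
        )
```

Under these assumptions, a 15-second 1080P clip comes to 24 RMB at the standard rate and 11.7 RMB at the subscription floor, so the best-case discount is a little over half.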

In today’s market, these rates are hard to ignore.

More importantly, HappyHorse has launched a “Top 100 Creator Program,” inviting global AIGC enthusiasts to try it for free.

HappyHorse Future: From Toy to Real Creative Tool

Taken together, HappyHorse’s capabilities and competitive pricing point in a clear direction: AI video is shifting from a “toy” to a “tool.”

In 2026, the competition is no longer about who has the best technical specs, but about whose product creators can actually use.

With HappyHorse entering the scene, a new possibility appears — delivering film-level quality at an accessible price.

This “happy horse” has just started running.