Short answer:

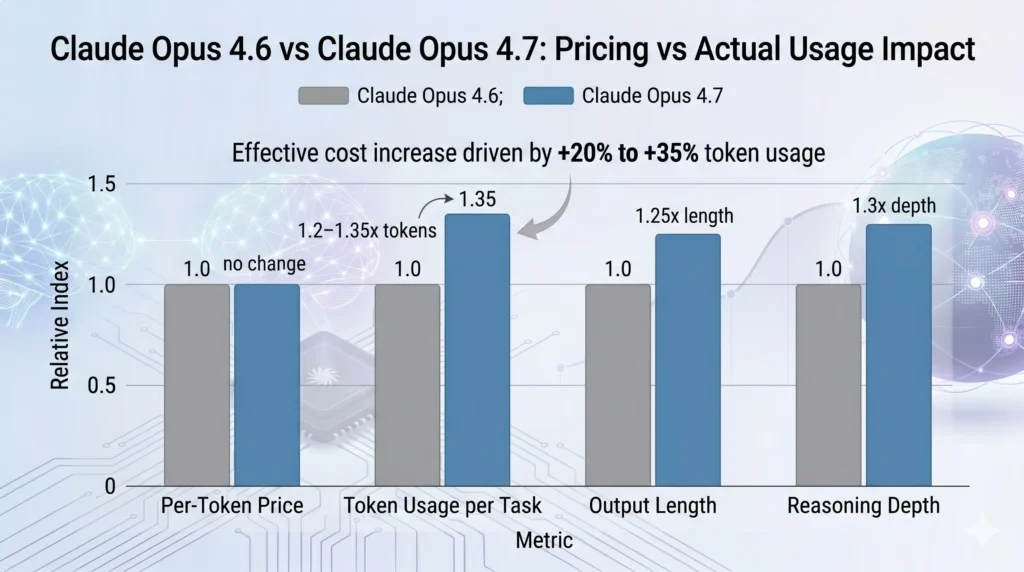

Claude Opus 4.7 is not more expensive per token at list price, but in real-world usage it often costs more because it consumes and generates more tokens, especially on complex tasks. The result is a higher effective cost per task, not a higher listed price.

Claude Opus 4.7 Pricing at a Glance

Claude Opus 4.7 keeps the same listed pricing as Opus 4.6 and 4.5. (Opus 4.1 was listed higher, at $15 input and $75 output per 1M tokens.) The key difference is not the price itself but how input text is converted into tokens: an updated tokenizer can increase the token count for the same prompt.

Pricing and Key Changes

| Category | Claude Opus 4.7 |
|---|---|
| Input Cost | $5 per 1M tokens |
| Output Cost | $25 per 1M tokens |
| Prompt Caching | Up to 90% discount on cache reads |
| Batch Processing | 50% discount for async workloads |
| Context Window | 1M tokens (same as Opus 4.6) |
| Tokenizer | New tokenizer, up to +35% tokens for same input |
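To see how the caching and batch discounts in the table could combine, here is a minimal Python sketch. It assumes the best-case 90% cache-read discount, assumes the batch discount applies on top of it, and does not model any cost of writing to the cache. The function name and token counts are hypothetical.

```python
INPUT_USD_PER_MTOK = 5.00    # listed input rate from the table
CACHE_READ_DISCOUNT = 0.90   # "up to 90%": best case assumed here
BATCH_DISCOUNT = 0.50        # listed batch discount


def input_cost(fresh_tokens: int, cached_tokens: int, batch: bool = False) -> float:
    """Estimate the USD input cost of one request.

    Assumptions (not confirmed by the pricing table): cache reads are billed
    at (1 - discount) of the input rate, and the batch discount stacks on top.
    """
    fresh = fresh_tokens * INPUT_USD_PER_MTOK
    cached = cached_tokens * INPUT_USD_PER_MTOK * (1 - CACHE_READ_DISCOUNT)
    total = (fresh + cached) / 1_000_000
    return total * (1 - BATCH_DISCOUNT) if batch else total


# Hypothetical 100k-token prompt, 90k of which is a reusable prefix.
print(f"no cache:       ${input_cost(100_000, 0):.4f}")
print(f"cached prefix:  ${input_cost(10_000, 90_000):.4f}")
print(f"cached + batch: ${input_cost(10_000, 90_000, batch=True):.4f}")
```

Under these assumptions, a large reusable prefix matters far more to the input bill than the tokenizer change does, so caching is a natural first lever to check.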

What This Means in Practice

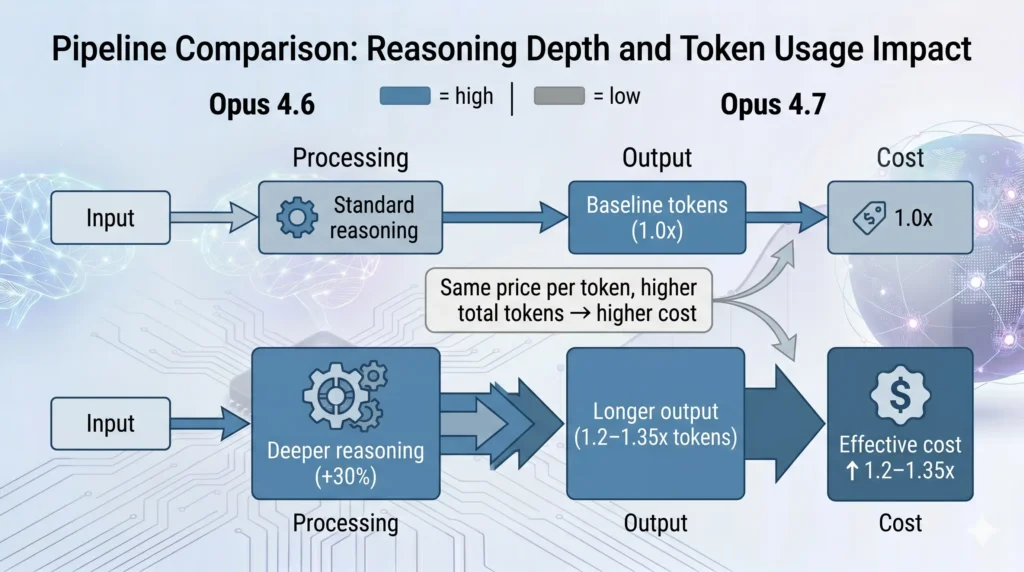

If you are already running workloads on Opus 4.6, expect the same base pricing but not necessarily the same total cost. Because of the tokenizer change, the same prompt can now be counted as more tokens.

In real terms:

- Cost per token → unchanged

- Tokens per request → potentially higher

- Total cost per request → may increase

For most existing workloads, the expected cost increase ranges from 0% to 35% per request, depending on how token-heavy your prompts are.
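The arithmetic behind that range can be sketched in a few lines of Python. The rates come from the pricing table; the request size is hypothetical, and applying the same inflation factor to output tokens as to input tokens is an assumption made for simplicity.

```python
INPUT_USD_PER_MTOK = 5.00    # listed input rate
OUTPUT_USD_PER_MTOK = 25.00  # listed output rate


def request_cost(input_tokens: int, output_tokens: int, inflation: float = 1.0) -> float:
    """Return the estimated USD cost of one request.

    `inflation` scales both token counts to model the new tokenizer:
    1.0 means no change, 1.35 is the worst case quoted above.
    """
    if inflation < 1.0:
        raise ValueError("inflation must be >= 1.0")
    billed_in = input_tokens * inflation
    billed_out = output_tokens * inflation
    return (billed_in * INPUT_USD_PER_MTOK + billed_out * OUTPUT_USD_PER_MTOK) / 1_000_000


# Hypothetical request: 20k input tokens, 2k output tokens.
baseline = request_cost(20_000, 2_000)
worst_case = request_cost(20_000, 2_000, inflation=1.35)
print(f"baseline ${baseline:.4f}, worst case ${worst_case:.4f}")
```

Because the per-token rate is fixed, the cost scales linearly with the inflation factor: a 35% rise in token count is a 35% rise in the bill for that request.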

What Changed in Claude Opus 4.7 Pricing?

From a pricing table perspective, Claude Opus 4.7 did not change per-token rates. However, based on hands-on testing and aggregated user research:

- Token usage per task rose to roughly 1.0×–1.35× of earlier levels in many coding and reasoning scenarios

- Long-form and multi-step outputs became more common

- The model spends more tokens on internal reasoning before answering

This aligns with Anthropic’s positioning: Opus 4.7 is designed as a high-reasoning, high-capability model, not a cost-efficient general-purpose model.

Key takeaway:

Listed pricing did not change. Behavior did. And behavior drives cost.

Why Claude Opus 4.7 Feels More Expensive in Practice

1. Deeper Reasoning = More Tokens

Claude Opus 4.7 produces more structured, step-by-step outputs. In practice, this means:

- Longer completions

- More intermediate reasoning

- Higher token consumption per request

Case Study: Coding Agent Workflow

- Use case: Automating complex backend logic generation

- Before (Opus 4.6):
  - Multiple prompts required
  - Shorter outputs

- After (Opus 4.7):
  - Single prompt handles multi-step reasoning
  - Output is longer and more complete

Measured impact:

- Token usage rose to ~1.2× of the Opus 4.6 level on average

Insight:

You are paying for fewer steps but deeper thinking per step.

2. Higher Capability Enables More Expensive Use Cases

Claude Opus 4.7 supports:

- Long-context processing (up to a 1M-token context window)

- Multi-step reasoning workflows

- Agent-style task execution

These capabilities unlock tasks that were previously impractical—but they also:

- Increase token usage dramatically

- Introduce nonlinear cost growth

Case Study: Long Document Analysis

- User type: Advanced user / builder

- Goal: Analyze large documents with multi-step logic

- Before:
  - Tasks split into multiple smaller queries

- After:
  - Single large context execution

Result:

- Fewer interactions

- Much higher token consumption per interaction

Data:

No cost figures were shared, but reports consistently describe significantly higher total usage.

Insight:

Opus 4.7 shifts cost from “many small calls” to “fewer but much larger calls.”

3. Cost Becomes Unpredictable

One of the most critical issues identified in real usage:

The cost per task is no longer easy to estimate.

Reasons:

- Variable reasoning depth

- Dynamic output length

- Context size fluctuations

Case Study: Agent Workflow Instability

- Use case: Multi-step automation pipeline

- Problem:
  - Same task → different token usage each run

- Impact:
  - Budget planning becomes difficult

Insight:

For production systems, predictability matters more than raw price.
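One way to plan around this variability is to budget from a high percentile of observed per-run costs rather than from the average. The sketch below uses a nearest-rank percentile; the sample costs are made-up illustration values, not measurements.

```python
import math
from statistics import mean


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least
    pct% of all samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical USD cost of ten runs of the same agent task.
run_costs = [0.12, 0.15, 0.11, 0.31, 0.14, 0.13, 0.22, 0.12, 0.16, 0.13]

print(f"mean:   ${mean(run_costs):.3f}")
print(f"median: ${percentile(run_costs, 50):.3f}")
print(f"p95:    ${percentile(run_costs, 95):.3f}")
```

When the p95 sits well above the mean, as in this invented sample, a budget based on the average will be exceeded regularly.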

4. Platform Pricing Can Multiply Costs

When accessed through third-party platforms (e.g., IDE integrations), pricing can differ significantly.

Case Study: IDE Integration (Copilot-like environment)

- Observation:
  - Effective cost reported at ~2× or higher compared to direct API usage

- Before:
  - Standard pricing across models

- After:
  - Opus 4.7 significantly more expensive

Insight:

Always distinguish between:

- Model pricing

- Platform pricing

They are not the same.

5. Rate Limits Create a “Perceived Cost Increase”

Another practical issue is usage limits:

- High token consumption per request

- Faster exhaustion of quotas

Case Study: General Usage

- Observation:
  - Some users hit limits after ~1–2 prompts in heavy tasks

- Impact:
  - Lower perceived value
  - Reduced usability

Insight:

Even without price changes, limits amplify cost perception.
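The effect is simple division, sketched below in Python. The quota and per-request token counts are hypothetical; actual limits depend on the plan or platform.

```python
def requests_per_quota(quota_tokens: int, tokens_per_request: int) -> int:
    """Return how many whole requests fit into a fixed token quota."""
    if tokens_per_request <= 0:
        raise ValueError("tokens_per_request must be positive")
    return quota_tokens // tokens_per_request


QUOTA = 1_000_000  # hypothetical token quota per limit window

before = requests_per_quota(QUOTA, 40_000)  # hypothetical earlier request size
after = requests_per_quota(QUOTA, 54_000)   # same request at 1.35x tokens

print(f"requests before: {before}, after: {after}")
```

The quota itself is unchanged in this sketch, yet fewer requests fit into it, which is why limits can feel like a price increase.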

Real Use Cases and ROI Analysis

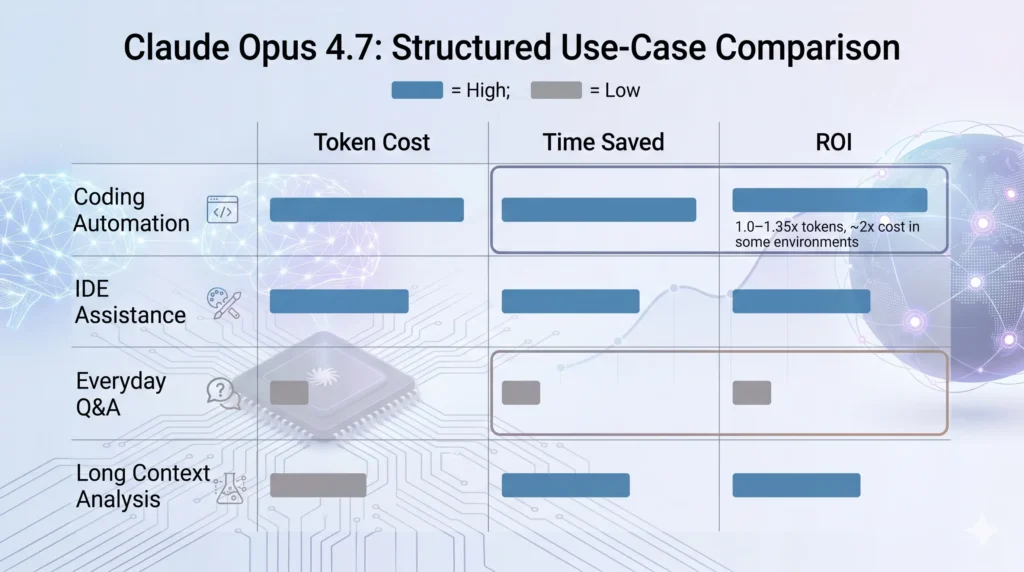

Case 1: Automated Coding Tasks

- User: Developer

- Goal: Reduce manual coding effort

- Before:
  - Multiple prompts
  - Manual stitching

- After:
  - Single, more complete output

Data:

- Token usage rose to 1.0×–1.35× of the earlier level

ROI Insight:

- Cost ↑

- Human effort ↓

Conclusion:

Worth it when developer time is expensive.

Case 2: IDE-Assisted Development

- User: Daily programmer

- Goal: Faster iteration

Data:

- Cost increased ~2× in some environments

Before:

- Lower-cost models sufficient

After:

- Higher cost with marginal gains for simple tasks

Insight:

Not cost-effective for routine coding.

Case 3: Everyday Q&A Usage

- User: General user

- Goal: Ask questions

Data:

- Quota exhausted after ~1–2 prompts in some cases

Before:

- Sustained conversations

After:

- Rapid usage depletion

Insight:

Opus 4.7 is overkill for simple queries.

Case 4: Long-Context Knowledge Work

- User: Analyst / researcher

- Goal: Process large inputs

Data:

- No usage or cost figures were shared

Before:

- Fragmented workflow

After:

- Unified processing

Insight:

High value, but requires strict cost control.

When Claude Opus 4.7 Is Worth the Price

Use it when:

- Tasks require multi-step reasoning

- You need high accuracy in complex logic

- You are building agents or automation systems

- Human time savings outweigh token costs

Avoid it when:

- Tasks are simple or repetitive

- You need predictable costs

- Budget constraints are tight

Claude Opus 4.7 vs Other Models (Cost Efficiency Perspective)

| Scenario | Best Choice |
|---|---|
| Simple Q&A | Smaller models |
| Routine coding | Mid-tier models |
| Complex reasoning | Opus 4.7 |
| Long workflows | Opus 4.7 (with monitoring) |

Key principle:

Match model complexity to task complexity.
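The table above can be expressed as a small routing function. This is only a sketch: the task categories mirror the table rows, and the tier labels are placeholders rather than real model identifiers.

```python
from enum import Enum


class Task(Enum):
    SIMPLE_QA = "simple_qa"
    ROUTINE_CODING = "routine_coding"
    COMPLEX_REASONING = "complex_reasoning"
    LONG_WORKFLOW = "long_workflow"


# Placeholder tier labels taken from the table, not real model IDs.
ROUTES = {
    Task.SIMPLE_QA: "smaller-model",
    Task.ROUTINE_CODING: "mid-tier-model",
    Task.COMPLEX_REASONING: "opus-4.7",
    Task.LONG_WORKFLOW: "opus-4.7",
}


def route(task: Task) -> str:
    """Return the model tier the table recommends for this task type."""
    return ROUTES[task]


def needs_monitoring(task: Task) -> bool:
    """Long workflows are the one row the table flags for monitoring."""
    return task is Task.LONG_WORKFLOW


for task in Task:
    note = " (with monitoring)" if needs_monitoring(task) else ""
    print(f"{task.value}: {route(task)}{note}")
```

In practice the hard part is classifying the task in the first place; a static mapping like this only helps once that decision has been made upstream.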

FAQ: Claude Opus 4.7 Pricing and Cost

Why does Claude Opus 4.7 feel more expensive?

Because it uses more tokens per task due to deeper reasoning and longer outputs.

Did Anthropic increase the price?

No. Listed per-token rates are unchanged. The increase comes from token usage, not rates.

How much more expensive is it in practice?

In many cases:

- 1.0×–1.35× the token usage of earlier versions

- 2× or more in total cost, depending on platform and workflow

Why are tokens higher in 4.7?

Two reasons: the new tokenizer can count up to 35% more tokens for the same input, and the model performs more internal reasoning and produces more complete answers.

Is Opus 4.7 worth it for coding?

Yes for complex tasks. No for simple or repetitive coding.

Why is cost unpredictable?

Because token usage varies based on reasoning depth and task complexity.

Can I control token usage?

Partially:

- Limit output length

- Use smaller models when possible

- Avoid unnecessary context
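Two of these controls, capping output length and trimming context, can be sketched as follows. The 4-characters-per-token estimate is a crude assumption, the model ID is a placeholder, and the helper names are hypothetical. `max_tokens` is the output cap in the request body; the code only builds the request and sends nothing.

```python
def rough_token_count(text: str) -> int:
    # Crude assumption: about 4 characters per token.
    # Real counts depend on the tokenizer and the content.
    return max(1, len(text) // 4)


def trim_context(chunks: list[str], budget: int) -> list[str]:
    """Keep the most recent chunks whose estimated size fits the budget."""
    kept: list[str] = []
    used = 0
    for chunk in reversed(chunks):
        size = rough_token_count(chunk)
        if used + size > budget:
            break
        kept.append(chunk)
        used += size
    kept.reverse()  # restore original order
    return kept


def build_request(question: str, chunks: list[str],
                  max_output_tokens: int = 1024,
                  context_budget: int = 8000) -> dict:
    """Build a request body with capped output and trimmed context."""
    context = "\n\n".join(trim_context(chunks, context_budget))
    return {
        "model": "claude-opus-4-7",       # placeholder model ID (assumption)
        "max_tokens": max_output_tokens,  # hard cap on output length
        "messages": [{"role": "user", "content": f"{context}\n\n{question}"}],
    }


chunks = ["a" * 400, "b" * 400, "c" * 40]  # hypothetical context chunks
request = build_request("Summarize the above.", chunks,
                        max_output_tokens=512, context_budget=120)
print(request["max_tokens"], len(request["messages"][0]["content"]))
```

A cap on output bounds the worst case per request but can truncate answers, so it works best alongside prompts that ask for concise output.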

Why do I hit limits so quickly?

Each request consumes more tokens, exhausting quotas faster.

Should I use Opus 4.6 instead?

If cost predictability matters more than peak performance, yes.

Is Opus 4.7 suitable for production?

Yes, but only with:

- Cost monitoring

- Task routing

- Usage controls
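As a minimal illustration of cost monitoring and usage controls, the sketch below tracks spend from reported token counts and stops once a budget is reached. The class and its names are hypothetical; the default rates are the listed $5 and $25 per 1M tokens.

```python
class BudgetExceeded(RuntimeError):
    """Raised when accumulated spend reaches the configured limit."""


class BudgetGuard:
    def __init__(self, limit_usd: float,
                 input_rate: float = 5.0, output_rate: float = 25.0):
        self.limit_usd = limit_usd
        self.input_rate = input_rate    # USD per 1M input tokens
        self.output_rate = output_rate  # USD per 1M output tokens
        self.spent_usd = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one request's cost to the running total and return it."""
        cost = (input_tokens * self.input_rate
                + output_tokens * self.output_rate) / 1_000_000
        self.spent_usd += cost
        return cost

    def check(self) -> None:
        """Raise BudgetExceeded if the limit has been reached."""
        if self.spent_usd >= self.limit_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f} of ${self.limit_usd:.2f}")


guard = BudgetGuard(limit_usd=1.00)
try:
    for _ in range(5):           # hypothetical pipeline of five requests
        guard.check()            # refuse to start a request once over budget
        guard.record(100_000, 10_000)
except BudgetExceeded as exc:
    print("stopped:", exc)
```

Checking before each request rather than after means the guard can overshoot by at most one request, which is usually an acceptable trade for simplicity.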

Is it good for everyday chat?

No. It is not optimized for casual use.

What’s the biggest mistake users make?

Using Opus 4.7 for tasks that don’t require its capabilities.

Final Insight

Claude Opus 4.7 is not overpriced—it is misused.

The real shift is this:

You are no longer paying for tokens.

You are paying for reasoning depth per task.

And unless your task actually needs that depth, the cost will feel unnecessarily high.