News that NVIDIA is using AI in chip design spread across the internet today: a task that once took 8 senior engineers 10 months now finishes overnight on a single GPU. The detail came out at the recent NVIDIA GTC, in a conversation between NVIDIA Chief Scientist Bill Dally and Google Chief Scientist Jeff Dean.

The talk has already drawn tens of thousands of views on YouTube and strong praise online. For most of the semiconductor industry's history, Moore's Law was treated as an unbreakable truth. But as physical limits approach, the complexity of developing a flagship GPU has grown exponentially. Is AI in chip design now pushing human engineers to the sidelines?

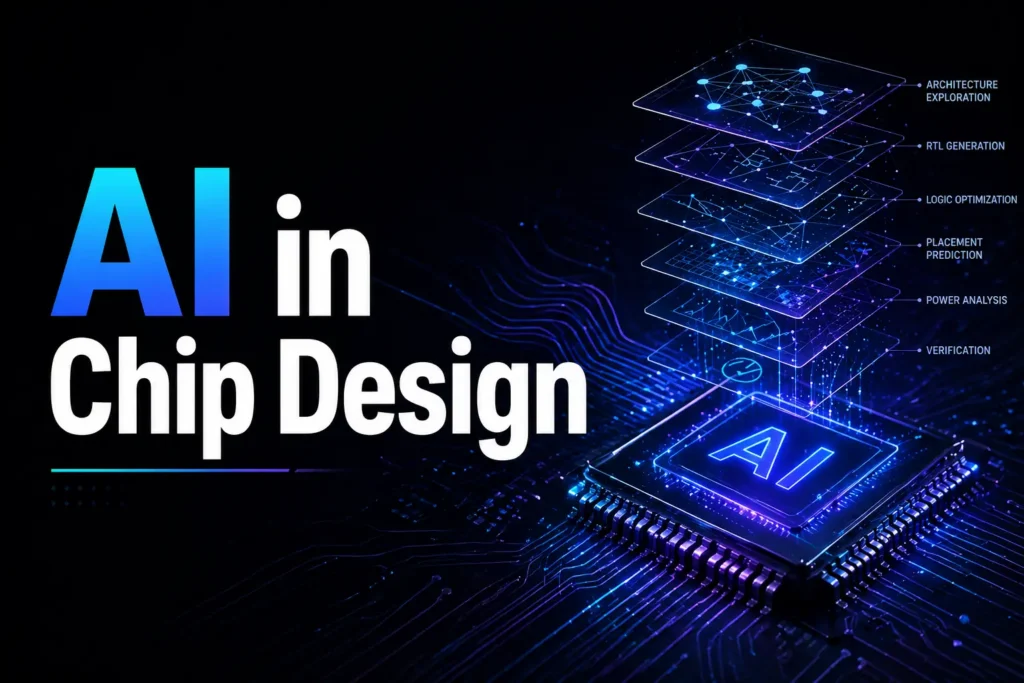

AI in Chip Design: From “80 Person-Months” to “One GPU Overnight”

In traditional chip design workflows, migrating the Standard Cell Library is an extremely tedious and labor-intensive task. Every time TSMC or Samsung introduces a new semiconductor process (like moving from 5nm to 3nm), NVIDIA must re-adapt its base library of about 2,500 to 3,000 cells to the new process.

Bill Dally said that this task used to take a team of 8 senior engineers 10 months of continuous work, or 80 person-months in total. AI has changed that.

NVIDIA has now developed a reinforcement learning-based tool called NVCell. Once the requirements are fed into the system, a single GPU can complete the entire migration overnight. Along the way, NVCell explores hundreds of millions of design combinations through trial and error and self-optimization.

What’s striking is that the AI-generated cells, in key metrics such as Area, Power, and Delay, not only reach human-level performance but in some cases surpass manual human designs. This “overnight delivery” capability means NVIDIA can validate new processes earlier than competitors, keeping a leading position in the hardware race.
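The trial-and-error loop described above can be pictured with a toy example. This is only a sketch: it swaps in plain hill climbing for reinforcement learning, and the six-device cell, the `NETS` list, and the wirelength cost are all hypothetical stand-ins, not anything taken from NVIDIA's tool. The idea it illustrates is simply "propose a change, score it, keep it if it is no worse."

```python
import random

# Hypothetical toy cell: 6 devices placed in a single row, plus nets that
# connect pairs of devices. Shorter nets stand in for better delay and power.
NETS = [(0, 3), (1, 4), (2, 5), (0, 5), (1, 2)]


def wirelength(order):
    """Sum of horizontal distances between connected devices."""
    pos = {dev: slot for slot, dev in enumerate(order)}
    return sum(abs(pos[a] - pos[b]) for a, b in NETS)


def hill_climb(n_devices=6, steps=2000, seed=0):
    """Randomized trial-and-error search (hill climbing, not real RL)."""
    rng = random.Random(seed)
    order = list(range(n_devices))
    best = wirelength(order)
    for _ in range(steps):
        i, j = rng.sample(range(n_devices), 2)
        order[i], order[j] = order[j], order[i]      # trial: swap two devices
        cost = wirelength(order)
        if cost <= best:
            best = cost                              # keep a move that is no worse
        else:
            order[i], order[j] = order[j], order[i]  # error: undo the swap
    return order, best


if __name__ == "__main__":
    order, best = hill_climb()
    print("start cost:", wirelength(list(range(6))), "final cost:", best)
```

A production tool would score real layouts against area, power, delay, and design-rule checks, and would learn which moves tend to pay off instead of sampling them blindly.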

AI in Chip Design: PrefixRL and “Non-Human Intuition”

AI in Chip Design: Rethinking Logic Design with PrefixRL

If NVCell takes over repetitive labor, PrefixRL shows AI’s creativity in complex logic design. In a chip’s Arithmetic Logic Unit (ALU), how to lay out the carry-lookahead chain has been a classic problem studied for decades.

Human engineers rely on experience and intuition for layout, often hitting a performance ceiling. But the PrefixRL system produced a completely different answer.

Dally described the AI-generated layouts as “strange designs that humans would never think of.” These designs go against traditional electronic engineering aesthetics, yet they perform about 20% to 30% better than the best human designs.
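To see why there is a design space to search at all, it helps to look at how carry-lookahead works. Carries in an adder can be computed by combining per-bit (generate, propagate) pairs with an associative operator, and because the operator is associative, many different network shapes give the same answer with different depth and gate count. The sketch below compares two textbook shapes, a serial chain and a Kogge-Stone network. It is a minimal illustration of that trade-off; the function names are made up for this example, and nothing here reproduces the designs Dally described.

```python
def combine(hi, lo):
    """Prefix operator on (generate, propagate) pairs; hi covers the higher bits."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def bit_signals(a, b, width):
    """Per-bit generate (a AND b) and propagate (a XOR b) signals."""
    return [(((a >> i) & (b >> i)) & 1, ((a >> i) ^ (b >> i)) & 1)
            for i in range(width)]


def ripple_prefix(gp):
    """Serial chain: fewest operators, but depth grows linearly with width."""
    out = [gp[0]]
    for i in range(1, len(gp)):
        out.append(combine(gp[i], out[i - 1]))
    return out, len(gp) - 1


def kogge_stone_prefix(gp):
    """Kogge-Stone network: logarithmic depth, at the cost of more operators."""
    out, dist, depth = list(gp), 1, 0
    while dist < len(out):
        out = [combine(out[i], out[i - dist]) if i >= dist else out[i]
               for i in range(len(out))]
        dist *= 2
        depth += 1
    return out, depth


def add(a, b, width, prefix):
    """Add two width-bit numbers using the given prefix network."""
    gp = bit_signals(a, b, width)
    prefixes, depth = prefix(gp)
    carries = [0] + [g for g, _ in prefixes]   # carries[i] is the carry into bit i
    total = carries[width] << width            # carry out of the top bit
    for i in range(width):
        total |= (gp[i][1] ^ carries[i]) << i  # sum bit = propagate XOR carry-in
    return total, depth


if __name__ == "__main__":
    print(add(200, 100, 8, ripple_prefix))       # (300, 7)
    print(add(200, 100, 8, kogge_stone_prefix))  # (300, 3)
```

Both networks return the same sum; they differ only in depth (a proxy for delay) and operator count (a proxy for area). A search-based tool explores the many shapes in between and beyond these two.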

This marks a turning point: AI in chip design is no longer just assisting humans—it is pushing beyond human cognitive boundaries, searching for “optimal solutions” hidden in millions of dimensions.

AI in Chip Design: ChipNeMo as a Silicon Mentor

Inside NVIDIA, poorly matched use of engineering time used to be a major pain point. Senior designers often spent large amounts of time guiding juniors and explaining how specific hardware modules, written in RTL, work.

To free up core productivity, NVIDIA developed two internal large language models: ChipNeMo and BugNeMo.

Unlike general-purpose LLMs on the market, these models are fine-tuned on NVIDIA’s proprietary architecture documents, RTL code, and hardware specifications accumulated over decades. After private training, they become “experts who understand NVIDIA GPUs best.”

Junior engineers no longer need to interrupt busy senior engineers when facing complex module designs. They can ask ChipNeMo directly. It acts like a very patient mentor, explaining GPU working principles step by step.

BugNeMo, on the other hand, aggregates error reports and automatically assigns bugs to the most suitable engineers or modules, greatly shortening chip verification, the marathon stage of the design process.
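The routing step can be pictured with a deliberately simple stand-in. The sketch below uses keyword overlap in place of a language model, and the module names and vocabularies in `MODULE_KEYWORDS` are invented for illustration; it shows the shape of the task (score each report against each candidate owner, pick the best, then aggregate), not how NVIDIA's internal model works.

```python
from collections import Counter

# Hypothetical modules and vocabularies, for illustration only.
MODULE_KEYWORDS = {
    "memory_controller": {"dram", "refresh", "bank", "timing"},
    "shader_core": {"warp", "register", "scheduler", "alu"},
    "interconnect": {"crossbar", "packet", "credit", "deadlock"},
}


def route(report):
    """Assign a bug report to the module whose keywords it overlaps most."""
    words = set(report.lower().replace(",", " ").replace(".", " ").split())
    scores = {module: len(words & keywords)
              for module, keywords in MODULE_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unassigned"


def triage(reports):
    """Aggregate a batch of reports into a per-module bug count."""
    return Counter(route(report) for report in reports)


if __name__ == "__main__":
    reports = [
        "Deadlock observed when crossbar credit counter underflows",
        "Warp scheduler stalls after register spill",
        "Typo in the release notes",
    ]
    print(triage(reports))
```

Reports that match no vocabulary fall into an `unassigned` bucket so that a human can pick them up, which mirrors the article's point that people stay in the loop.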

AI in Chip Design: Can AI Fully Design Chips on Its Own?

Despite a hundredfold increase in efficiency, Bill Dally remained extremely clear-headed and restrained in the discussion. He explicitly pointed out that fully end-to-end automated chip design—where you simply say “design me a new GPU” and AI outputs a complete blueprint—is still “a long way off.”

At present, AI in chip design plays more of an “Augmented Design” role rather than autonomous chip creation.

There are three key limitations:

AI in Chip Design Still Needs Human Architecture Decisions

High-level architectural decisions still rely on human expertise.

AI in Chip Design Still Needs Human Circuit Creativity

Creative circuit design and complex logic structures are still led by humans.

AI in Chip Design Still Faces Verification Limits

Design verification is still the “long pole” in the process. AI can help speed it up, but cannot fully close the loop.

In other words, framework-setting tasks—such as top-level architecture, cross-module coordination, and key decisions—remain firmly in human hands. Also, although AI can speed up verification, final simulation and real-world testing are still necessary to ensure chips work flawlessly in physical reality.

AI in Chip Design: Human + AI Workflow

NVIDIA’s practice shows that AI is not replacing engineers—it is reshaping how engineers work.

Junior engineers use ChipNeMo to learn complex modules independently, reducing interruptions to senior staff. Senior engineers are freed from repetitive tasks and can focus on higher-value innovation and decision-making.

Across the workflow, AI handles large-scale search, optimization, and verification, while humans define goals, constraints, and creative direction.

This is essentially a collaborative model of “human sets the framework + AI executes at high speed.”

Dally envisions a future with “multi-agent” models, where different specialized AI systems handle different design stages, collaborating like functional teams today.

The long-term goal remains end-to-end automated design, but challenges such as verification, interface negotiation, and dynamic adjustment still need to be solved.

Current progress already lets NVIDIA iterate on next-generation hardware faster, which makes AI an important support for sustaining Moore’s Law.

AI in Chip Design: Engineers Won’t Be Replaced—Yet

When 10 months of work by 8 engineers is replaced by one night on a GPU, we have to face a harsh reality: mediocre, labor-intensive engineering work is rapidly depreciating.

NVIDIA is building an AI-driven technological barrier. While competitors are still trying to catch up by adding manpower, NVIDIA has already entered a self-reinforcing system of “AI designing AI, AI optimizing AI.”

This kind of efficiency advantage is exactly why it can release a new flagship graphics card every year.

For chip engineers, this is both a crisis and an opportunity. Humans are being freed from tedious wiring and cell migration, forced to evolve toward higher-level architectural thinking and more complex creative decisions.

AI in Chip Design: A New Era of Silicon Creation

In this new era of silicon-based chip design, computation is no longer just the purpose of chips—computation has become the very origin of how chips are created.